What is the boundary of a boundary?

Intuitive Homology

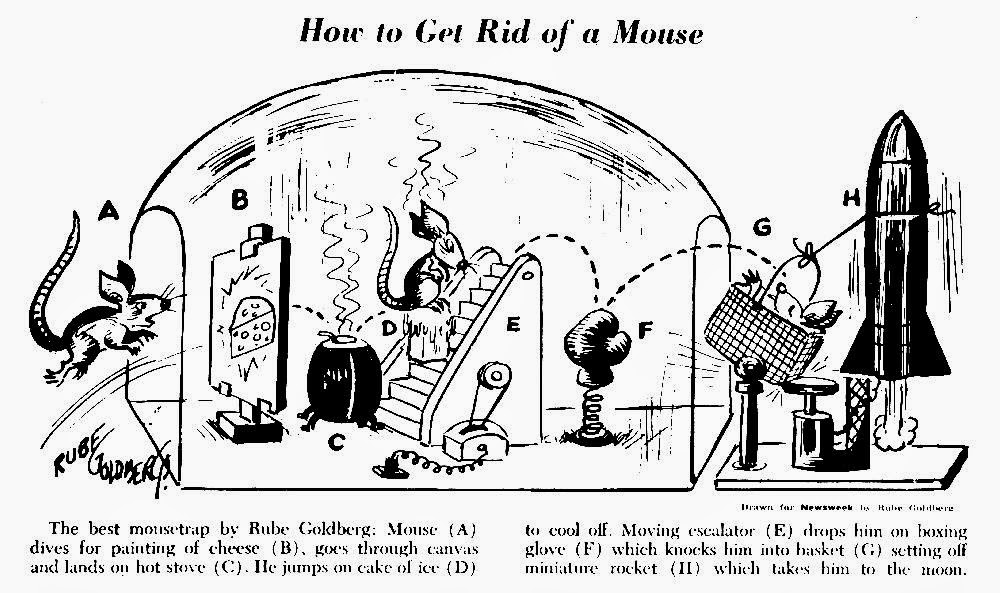

Continuing the geometry discussion, let us start with an elementary argument. Consider a triangle and label its vertices A, B, and C. How can we define its boundary? On the first try it is easy: it is the sum of the segments AB, BC, and CA. Now consider a point D outside this triangle (for definiteness, let it be next to segment BC) and construct the quadrilateral ABDC. We would like to build its boundary from the boundaries of the two triangles ABC and BDC. The problem is that the segment BC is not part of the boundary of ABDC, so we need to add and subtract it at the same time. This works if we consider oriented segments, with the convention that AB = -BA (here AB means walking from A and arriving at B). The triangle ABC then contributes AB + BC + CA, the triangle BDC contributes BD + DC + CB, and since BC + CB = 0 the sum is AB + BD + DC + CA, which is exactly the boundary of ABDC. So if A, B, and C are the vertices of the original triangle, its boundary is AB + BC - AC. Alternatively, writing (noX) for a vertex that has been left out:

(noA)BC - A(noB)C + AB(noC).

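This straightforwardly generalizes to a simplex given by the vertices $A_1, A_2, \ldots, A_n$, whose boundary is the following sum of lower-dimensional simplexes:

$$\sum_{k=1}^{n} (-1)^k \,(A_1 A_2 \ldots A_{k-1} A_{k+1} \ldots A_n)$$

With the vertices numbered from 1, this differs from the triangle example by an overall sign, which is purely a matter of convention and plays no role in what follows. We can then introduce a formal definition of the boundary of a simplex $(A_1 A_2 \ldots A_n)$:

$$\partial (A_1 A_2 \ldots A_n) = \sum_{k=1}^{n} (-1)^k \,(A_1 A_2 \ldots A_{k-1} A_{k+1} \ldots A_n)$$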
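The definition can be tried out on small cases with a few lines of Python. This is only a minimal sketch: the function name `boundary` and the choice to represent a simplex as a tuple of vertex labels are assumptions made for illustration, and the sign follows the formula literally, with k counted from 1.

```python
from typing import Dict, Sequence, Tuple

Simplex = Tuple[str, ...]


def boundary(simplex: Sequence[str]) -> Dict[Simplex, int]:
    """Return the faces of `simplex` with their signs, as {face: sign}.

    The face obtained by deleting the k-th vertex (k counted from 1)
    gets the sign (-1)**k, exactly as in the formula in the text.
    """
    faces: Dict[Simplex, int] = {}
    for k in range(1, len(simplex) + 1):
        # Drop the k-th vertex and keep the others in their original order.
        face = tuple(simplex[:k - 1]) + tuple(simplex[k:])
        faces[face] = (-1) ** k
    return faces


if __name__ == "__main__":
    # Print the signed faces of the triangle ABC.
    for face, sign in boundary(("A", "B", "C")).items():
        print(f"{sign:+d} {''.join(face)}")
```

For the triangle this yields -BC + AC - AB, which is the expression (noA)BC - A(noB)C + AB(noC) with every sign flipped. The overall sign depends only on whether the vertices are counted from 0 or from 1, and it does not affect any cancellation argument.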

Key question: what would be the boundary of the boundary of a simplex, $\partial \partial (A_1 A_2 \ldots A_n)$? Each term in the sum has two vertices killed, say the i-th and the j-th, and we can do this in two different ways: first kill i and then j, or the other way around. For the sake of the argument, assume i is smaller than j. What is the sign in front of the two terms?

- In one case, eliminating the i-th component first gives the sign $(-1)^i$, and eliminating the j-th component afterwards gives $(-1)^{j-1}$, because the list of vertices has become shorter by one and the j-th vertex now sits in position j-1. The final answer is $(-1)^i (-1)^{j-1} = (-1)^{i+j-1}$.

- In the other case, eliminating the j-th component first gives the sign $(-1)^j$, and eliminating the i-th component afterwards gives $(-1)^i$, because removing a later vertex does not shift the i-th one. The final answer is $(-1)^j (-1)^i = (-1)^{i+j}$.

The key point is that the two terms cancel and the boundary of a boundary is zero.
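As a concrete check of the sign bookkeeping, take a simplex with four vertices $(A_1 A_2 A_3 A_4)$ and kill $A_1$ and $A_3$, so that i = 1 and j = 3. Both orders leave the same face $(A_2 A_4)$, but with opposite signs:

$$
\begin{aligned}
\text{first } A_1,\ \text{then } A_3: &\quad (-1)^{1}\,(-1)^{3-1}\,(A_2 A_4) = -(A_2 A_4),\\
\text{first } A_3,\ \text{then } A_1: &\quad (-1)^{3}\,(-1)^{1}\,(A_2 A_4) = +(A_2 A_4).
\end{aligned}
$$

The two contributions add up to zero, and the same happens for every other pair of vertices.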

But why bother with all this? What is the point? This is essential because any smooth, compact, and oriented space can be approximated by small enough simplexes, and the inner boundaries between neighboring simplexes cancel each other.
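The cancellation of inner boundaries can be sketched in code for the two-dimensional case. The function name `oriented_boundary` and the bookkeeping (each edge stored under its alphabetically sorted name, with a coefficient of +1 or -1) are assumptions made for this illustration, and the triangles are assumed to be listed with a consistent orientation.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Triangle = Tuple[str, str, str]
Edge = Tuple[str, str]


def oriented_boundary(triangles: Iterable[Triangle]) -> Dict[Edge, int]:
    """Sum the oriented edges of all triangles, using the rule XY = -YX.

    Each triangle (P, Q, R) contributes PQ + QR + RP. An edge is stored
    under its alphabetically sorted name, with coefficient +1 if it is
    walked in that order and -1 if it is walked backwards.
    """
    total: Dict[Edge, int] = defaultdict(int)
    for p, q, r in triangles:
        for start, end in ((p, q), (q, r), (r, p)):
            if start < end:
                total[(start, end)] += 1
            else:
                total[(end, start)] -= 1
    # Keep only the edges whose contributions did not cancel.
    return {edge: coeff for edge, coeff in total.items() if coeff != 0}


if __name__ == "__main__":
    # The quadrilateral ABDC built from the triangles ABC and BDC.
    print(oriented_boundary([("A", "B", "C"), ("B", "D", "C")]))
```

For the quadrilateral ABDC the shared segment BC appears once in each direction and drops out, leaving AB + BD - CD - AC, that is AB + BD + DC + CA. Adding more consistently oriented triangles to the list works the same way: only the outer edges survive.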

In general we want to uncover the holes in a space, because spaces with a different number or different kinds of holes are different, and this is a great tool for distinguishing spaces (for example, a doughnut is different from a sphere).

But how many spaces with holes do we know in physics? Actually a lot, if we think carefully. In physics they usually hide under the term “boundary conditions”. So what? We want to solve problems (say, the electric field distribution in electrostatics), not classify spaces. But there is a very deep connection between solving differential equations like Maxwell’s equations and the classification of spaces. To understand the link we first need to understand the concept of a hole. In general a hole has two key properties:

1. it has a boundary

2. the boundary of a hole is not the boundary of anything else

Why is this so? Property (1) is true because you can walk around a hole in the space which contains it. For property (2), consider a ball. The ball is hollow precisely when it is not filled. If the ball is filled and solid, its surface is then the boundary of the material inside.
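The two properties can be tested on a lower-dimensional cousin of the ball: a triangle whose interior is either missing or filled in. The sketch below counts the independent closed loops that are not the boundary of anything, computed as (number of closed loops) minus (number of loops that bound a filled triangle). All function names are hypothetical, the rank is computed by plain Gaussian elimination over the rationals, and the sign convention is the (-1)^k one used earlier with k counted from 1.

```python
from fractions import Fraction
from typing import List, Sequence, Tuple

Simplex = Tuple[str, ...]


def rank(matrix: List[List[int]]) -> int:
    """Rank of an integer matrix over the rationals (Gaussian elimination)."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    n_cols = len(rows[0]) if rows else 0
    r = 0
    for col in range(n_cols):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, len(rows)):
            factor = rows[i][col] / rows[r][col]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
    return r


def boundary_matrix(simplices: Sequence[Simplex],
                    faces: Sequence[Simplex]) -> List[List[int]]:
    """Matrix of the boundary map: one row per face, one column per simplex."""
    matrix = [[0] * len(simplices) for _ in faces]
    for col, simplex in enumerate(simplices):
        for k in range(len(simplex)):
            face = simplex[:k] + simplex[k + 1:]
            # Deleting the (k+1)-th vertex, counted from 1, gives sign (-1)**(k+1).
            matrix[list(faces).index(face)][col] = (-1) ** (k + 1)
    return matrix


def loops_that_bound_nothing(vertices: Sequence[Simplex],
                             edges: Sequence[Simplex],
                             triangles: Sequence[Simplex]) -> int:
    """Count independent closed edge loops that are not boundaries."""
    closed = len(edges) - rank(boundary_matrix(edges, vertices))
    filled = rank(boundary_matrix(triangles, edges))
    return closed - filled


if __name__ == "__main__":
    vertices = [("A",), ("B",), ("C",)]
    edges = [("A", "B"), ("A", "C"), ("B", "C")]
    print(loops_that_bound_nothing(vertices, edges, []))                 # hollow
    print(loops_that_bound_nothing(vertices, edges, [("A", "B", "C")]))  # filled
```

The hollow triangle has one loop that bounds nothing, which is the hole. Once the interior ABC is added, that same loop becomes the boundary of the filled triangle and the count drops to zero, just as the surface of a solid ball is the boundary of the material inside.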

This will lead to “Ker modulo Image” and “closed modulo exact”, a mathematical pattern which occurs again and again in modern physics. In particular, electric charges are best understood as “cohomology classes of the Hodge dual of a two-form”. This is a mouthful, but it has a deep geometric interpretation which unlocks the proper understanding of Yang-Mills theory and the Standard Model. Stay tuned and I’ll explain all this using only elementary arguments. The subject is a bit dry for now, but hang in there because the physics payoff is great.